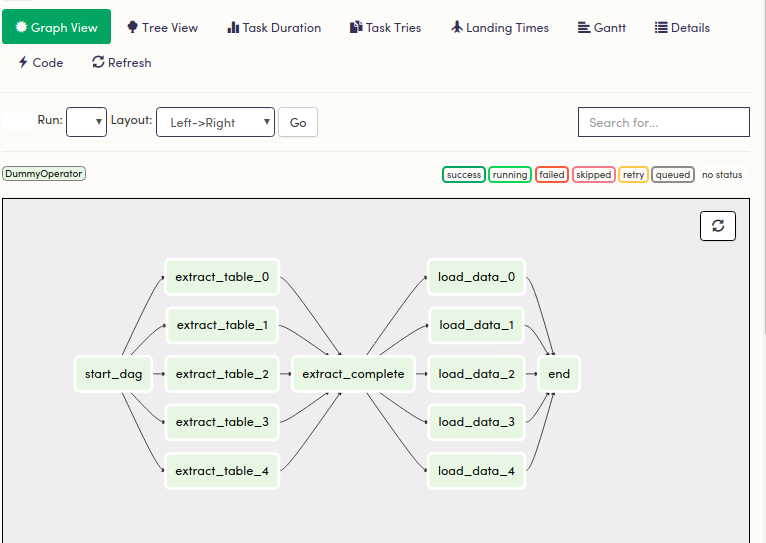

Apache Airflow is an open source platform to run tasks, designed to be scalable and performant, featuring a modern and robust UI. We transitioned to using Airflow in order to upgrade our existing task infrastructure, to improve the rate and volume of data that we can process, and to increase reliability. We've incorporated Airflow in our processes for about six months now, and have reached the point of a stable release. Airflow is primarily used to run tasks in our Extract, Transform and Load (ETL) pipeline, and is currently running thousands of tasks per hour, a figure that will continue to grow as we add even more tasks (like forecasting). With that in mind, we wanted to share some of the obstacles we've faced specifically in the transition to Airflow 2.0, and how, by using Airflow's built-in tools, we quickly identified issues and were able to solve them.

If you are reading this blog, I assume you are already familiar with the Apache Airflow basics. If not, please visit “Introduction to Apache-Airflow”. Before proceeding further, let's understand DAGs.

What is a DAG?

DAG stands for Directed Acyclic Graph. In simple terms, it is a graph with nodes, directed edges, and no cycles. In the above example, the 1st graph is a DAG while the 2nd graph is NOT a DAG, because there is a cycle (Node A → Node B → Node C → Node A).

In Apache Airflow, “DAG” means “data pipeline”. It is authored using the Python programming language. Whenever a DAG is triggered, a DAGRun is created. A DAGRun is an instance of the DAG with an execution date.

Import the Python dependencies needed for the workflow. To create a DAG in Airflow, you always have to import the DAG class. The next import is related to the operators you use, such as BashOperator, PythonOperator, BranchPythonOperator, etc.

Next come the default arguments, which define default and DAG-specific arguments. If a dictionary of default_args is passed to a DAG, it will apply them to any of its operators. This makes it easy to apply a common parameter to many operators without having to type it many times. Entries in this dictionary look like `'execution_timeout': timedelta(seconds=300)`, `'on_success_callback': some_other_function`, and `'sla_miss_callback': yet_another_function`.

Then instantiate the DAG: give the DAG a name (it should be unique), configure the schedule, and set the DAG settings. Here are a couple of options you can use for your schedule_interval. You can choose to use a preset argument or a cron-like argument:

| Preset | Meaning | Cron |
| --- | --- | --- |
| `None` | Don't schedule; use for exclusively "externally triggered" DAGs | |
| `@once` | Schedule once and only once | |
| `@hourly` | Run once an hour at the beginning of the hour | `0 * * * *` |
| `@daily` | Run once a day at midnight | `0 0 * * *` |
| `@weekly` | Run once a week at midnight on Sunday morning | `0 0 * * 0` |
| `@monthly` | Run once a month at midnight on the first day of the month | `0 0 1 * *` |
| `@yearly` | Run once a year at midnight of January 1 | `0 0 1 1 *` |

The next step is to lay out all the tasks in the workflow. A node in the DAG represents a task. Tasks are arranged into DAGs, and then have upstream and downstream dependencies set between them in order to express the order they should run in. There are three basic kinds of task:

- Operators: predefined task templates that you can string together quickly to build most parts of your DAGs.
- Sensors: a special subclass of Operators which are entirely about waiting for an external event to happen.
- TaskFlow-decorated `@task`: a custom Python function packaged up as a Task.

The key part of using Tasks is defining how they relate to each other: their dependencies, or as we say in Airflow, their upstream and downstream tasks. First you declare your Tasks, and then you declare their dependencies. There are two ways of declaring dependencies: using the `>>` and `<<` operators, for example `first_task >> second_task`, or the more explicit set_downstream and set_upstream methods, for example `first_task.set_downstream(second_task)`.

The attached screenshot shows a complete example of DAG creation in Apache Airflow. After running the code, open your browser and go to localhost:8080. You will see various DAGs which are already created by Airflow. After clicking on a DAG, you will get a detailed view of its tasks. You can also check the Graph View for a better visualization of tasks and their dependencies. Similarly, you can check other views such as Calendar, Task Duration, Gantt, Code, etc.

I hope you are now able to understand DAG creation in Apache Airflow. Read the Apache Airflow documentation for more knowledge. To gain more information, visit Knoldus Blogs.
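To make the "no cycles" part of the DAG definition concrete, here is a minimal sketch in plain Python that checks whether a set of directed edges forms a DAG. It uses Kahn's algorithm, a standard technique, and says nothing about how Airflow itself validates DAGs; the function name `is_dag` and the edge lists are made up for illustration.

```python
from collections import deque


def is_dag(edges):
    """Return True if the directed graph given as (source, target) pairs has no cycle."""
    nodes = {node for edge in edges for node in edge}
    downstream = {node: [] for node in nodes}
    indegree = {node: 0 for node in nodes}
    for src, dst in edges:
        downstream[src].append(dst)
        indegree[dst] += 1

    # Kahn's algorithm: repeatedly remove nodes that have no remaining upstream edges.
    ready = deque(node for node in nodes if indegree[node] == 0)
    visited = 0
    while ready:
        node = ready.popleft()
        visited += 1
        for nxt in downstream[node]:
            indegree[nxt] -= 1
            if indegree[nxt] == 0:
                ready.append(nxt)

    # Nodes that sit on a cycle never reach in-degree 0, so they are never visited.
    return visited == len(nodes)


# Hypothetical graphs: the second one contains the cycle A -> B -> C -> A.
acyclic = [("A", "B"), ("B", "C"), ("A", "C")]
cyclic = [("A", "B"), ("B", "C"), ("C", "A")]
print(is_dag(acyclic))
print(is_dag(cyclic))
```

The first call reports a valid DAG and the second does not, matching the Node A → Node B → Node C → Node A cycle described in the text.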
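The idea behind default_args, a shared dictionary applied to every operator unless a task overrides it, can be sketched without Airflow as a simple dictionary merge. This is only an illustration of the concept, not Airflow's implementation; `resolve_task_args`, the task ids, and the values for `owner` and `retries` are assumptions made up for the example.

```python
from datetime import timedelta


def resolve_task_args(default_args, **task_kwargs):
    """Combine shared defaults with per-task arguments; per-task values win."""
    merged = dict(default_args)   # copy so the shared defaults are never mutated
    merged.update(task_kwargs)    # explicit task arguments override the defaults
    return merged


# Hypothetical shared defaults, in the spirit of a default_args dictionary.
default_args = {
    "owner": "data-team",
    "retries": 1,
    "execution_timeout": timedelta(seconds=300),
}

# Two hypothetical tasks: the first accepts the defaults, the second overrides retries.
extract_args = resolve_task_args(default_args, task_id="extract")
load_args = resolve_task_args(default_args, task_id="load", retries=3)

print(extract_args["retries"], load_args["retries"])
```

Copying the defaults before merging keeps one task's override from leaking into another, which is the property that makes a shared dictionary safe to reuse.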
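The two ways of declaring dependencies described above can be mimicked with a small stand-in class. The `Task` class below is hypothetical and is not Airflow's operator class; it only shows how the `>>` and `<<` operators can be thin wrappers around set_downstream and set_upstream, and why returning the right-hand task allows chaining.

```python
class Task:
    """A toy stand-in for a task, used only to illustrate dependency declaration."""

    def __init__(self, task_id):
        self.task_id = task_id
        self.upstream = set()     # ids of tasks that must run before this one
        self.downstream = set()   # ids of tasks that run after this one

    def set_downstream(self, other):
        self.downstream.add(other.task_id)
        other.upstream.add(self.task_id)

    def set_upstream(self, other):
        other.set_downstream(self)

    def __rshift__(self, other):
        # first >> second means "second runs after first".
        self.set_downstream(other)
        return other              # returning the right-hand task allows chaining

    def __lshift__(self, other):
        # second << first means "second runs after first".
        self.set_upstream(other)
        return other


# Declare the tasks first, then their dependencies.
first_task = Task("first_task")
second_task = Task("second_task")
third_task = Task("third_task")

first_task >> second_task               # operator style
second_task.set_downstream(third_task)  # explicit method style

print(sorted(second_task.upstream), sorted(second_task.downstream))
```

Both styles record the same relationship, so which one to use is mostly a question of readability.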
